Fraud Detection

FPT AI eKYC combines advanced proprietary AI models with a Mobile SDK to detect and prevent a wide range of fraud, including spoofing attacks that target both facial biometrics and identity document images. Two primary attack vectors are addressed:

- Presentation Attacks - detected and mitigated by AI models through recognition of spoofing indicators;

- Injection Attacks - prevented by the Mobile SDK via device-level security mechanisms.

1. Presentation Attack

a. Overview

A Presentation Attack occurs when an adversary presents a photograph, video, mask, or 3D model of another person in front of a camera in order to deceive a facial recognition system.

The defining characteristics of a Presentation Attack are:

- The data processing pipeline remains intact;

- The camera operates normally;

- However, the input data has been manipulated - its authenticity is compromised before it ever reaches the camera sensor.

Examples:

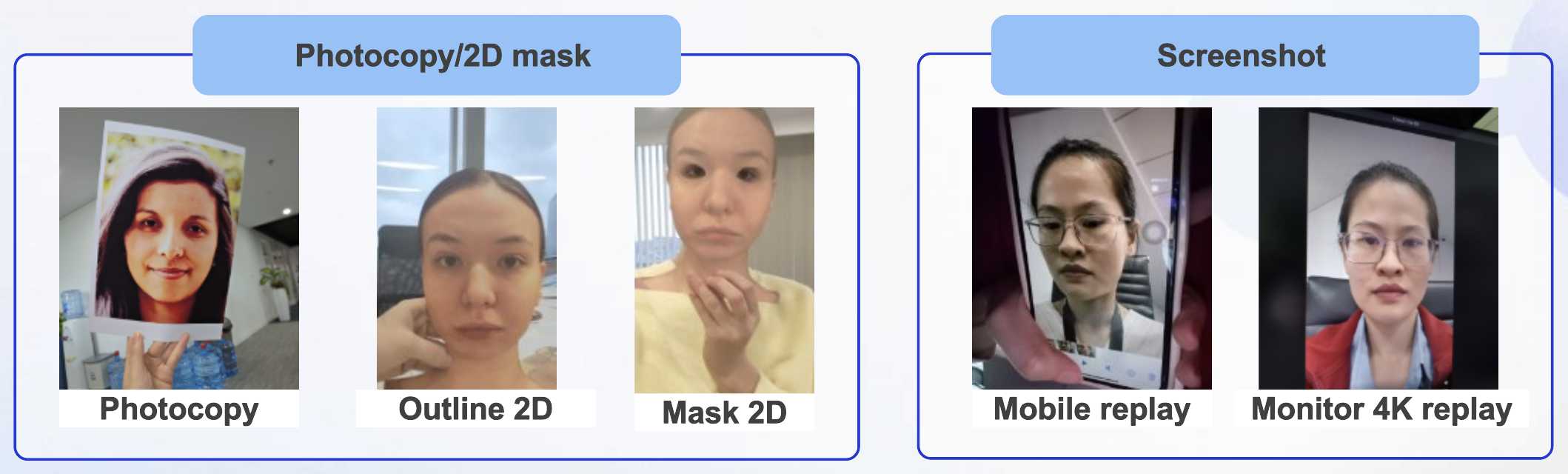

Facial Spoofing:

2D Attacks:

- Printed facial photographs;

- Video replay on a digital device.

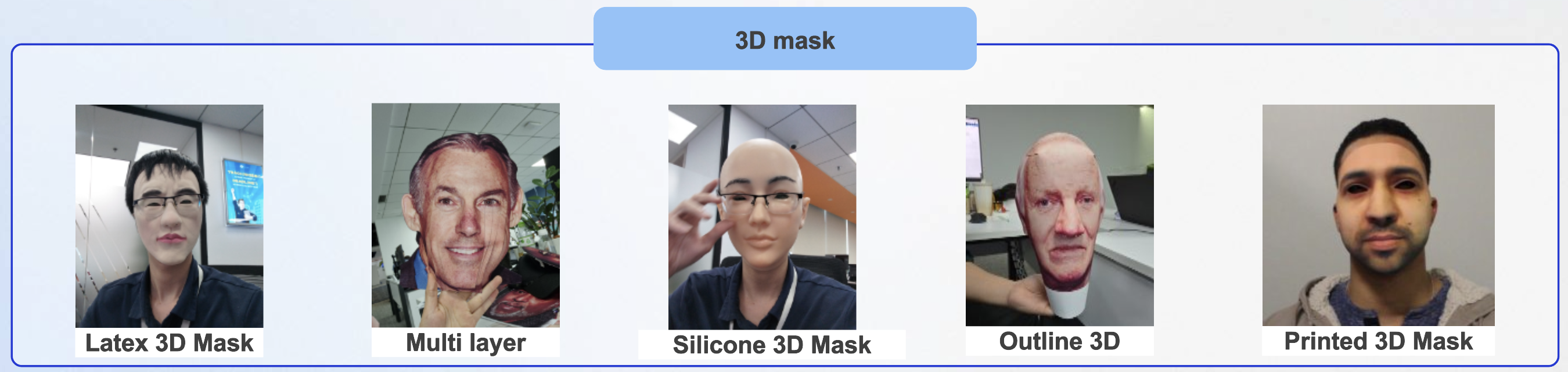

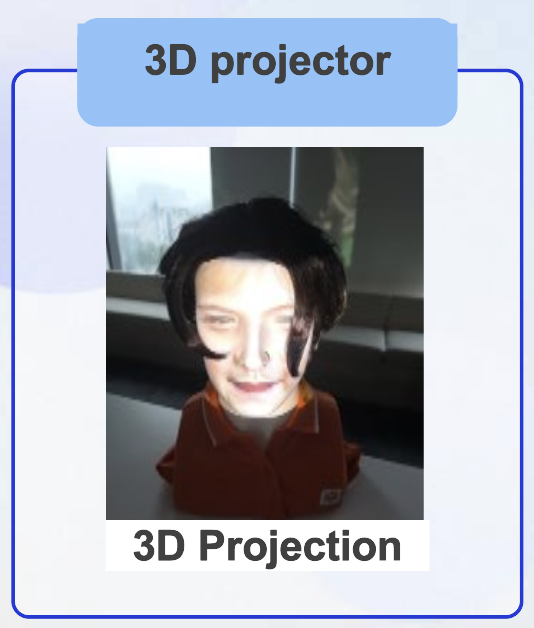

3D Attacks:

- Silicone or rubber masks;

- High-resolution projection onto a mannequin head.

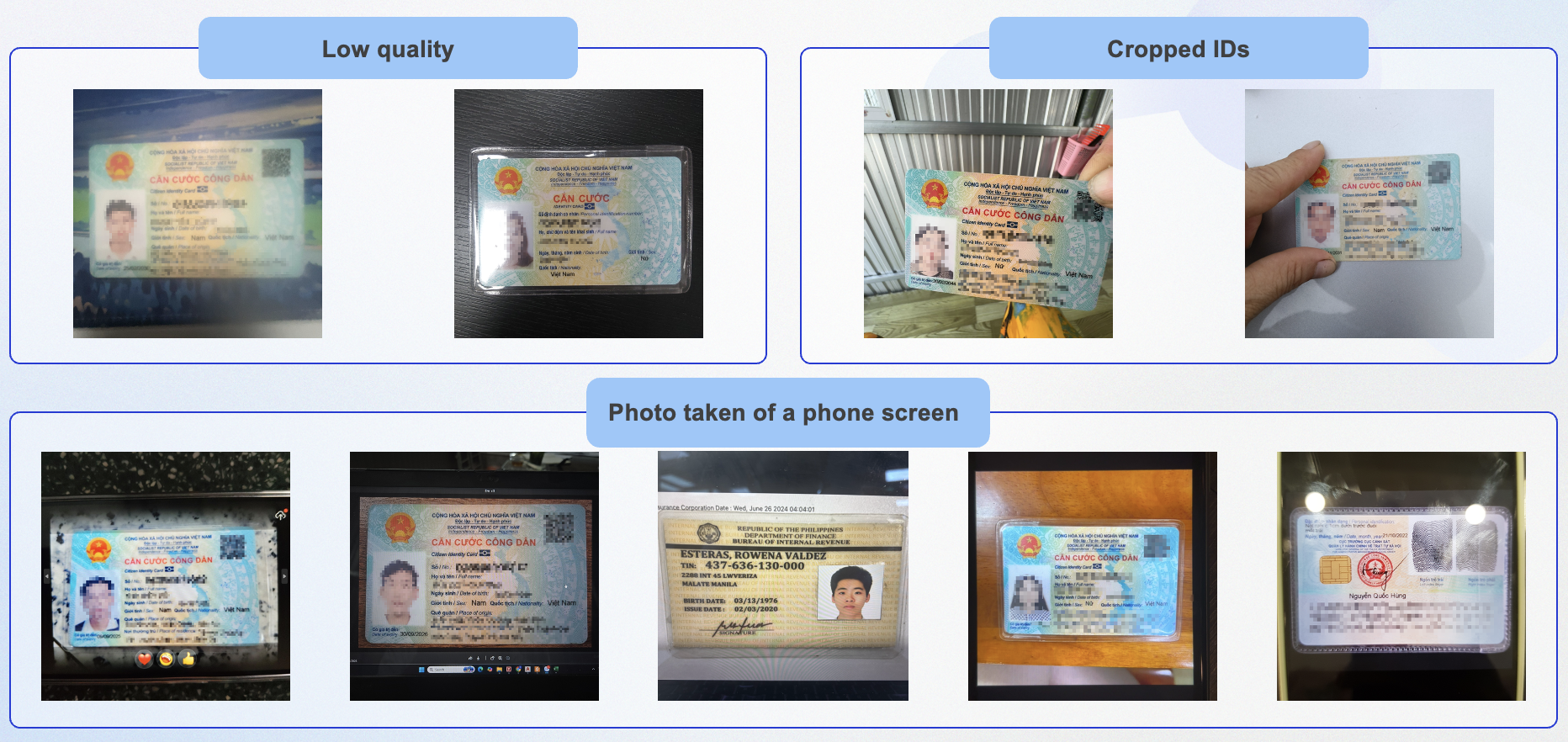

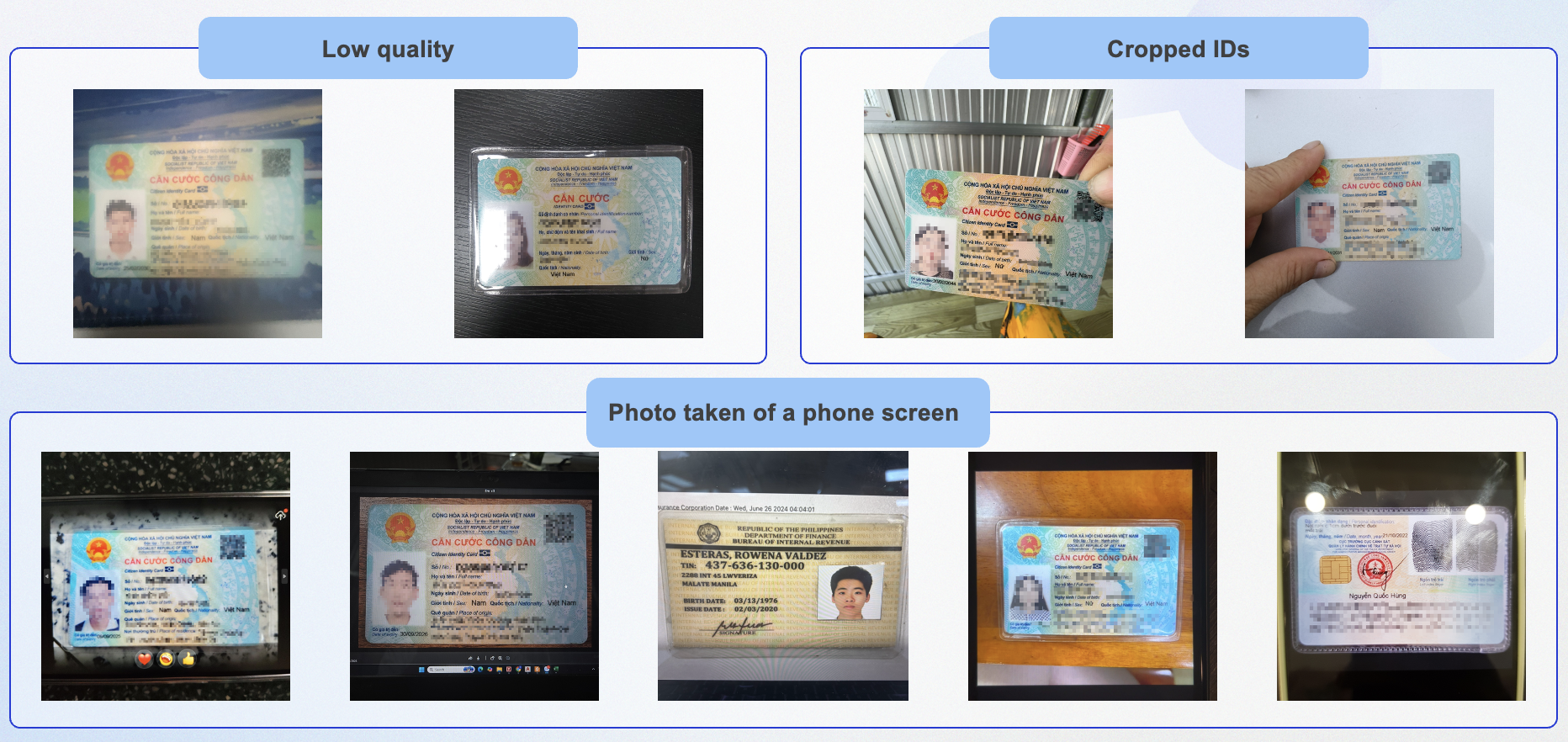

Identity Document Spoofing:

2D Attacks:

- Printed copies of identity documents;

- Screen recapture of document images.

3D Attacks:

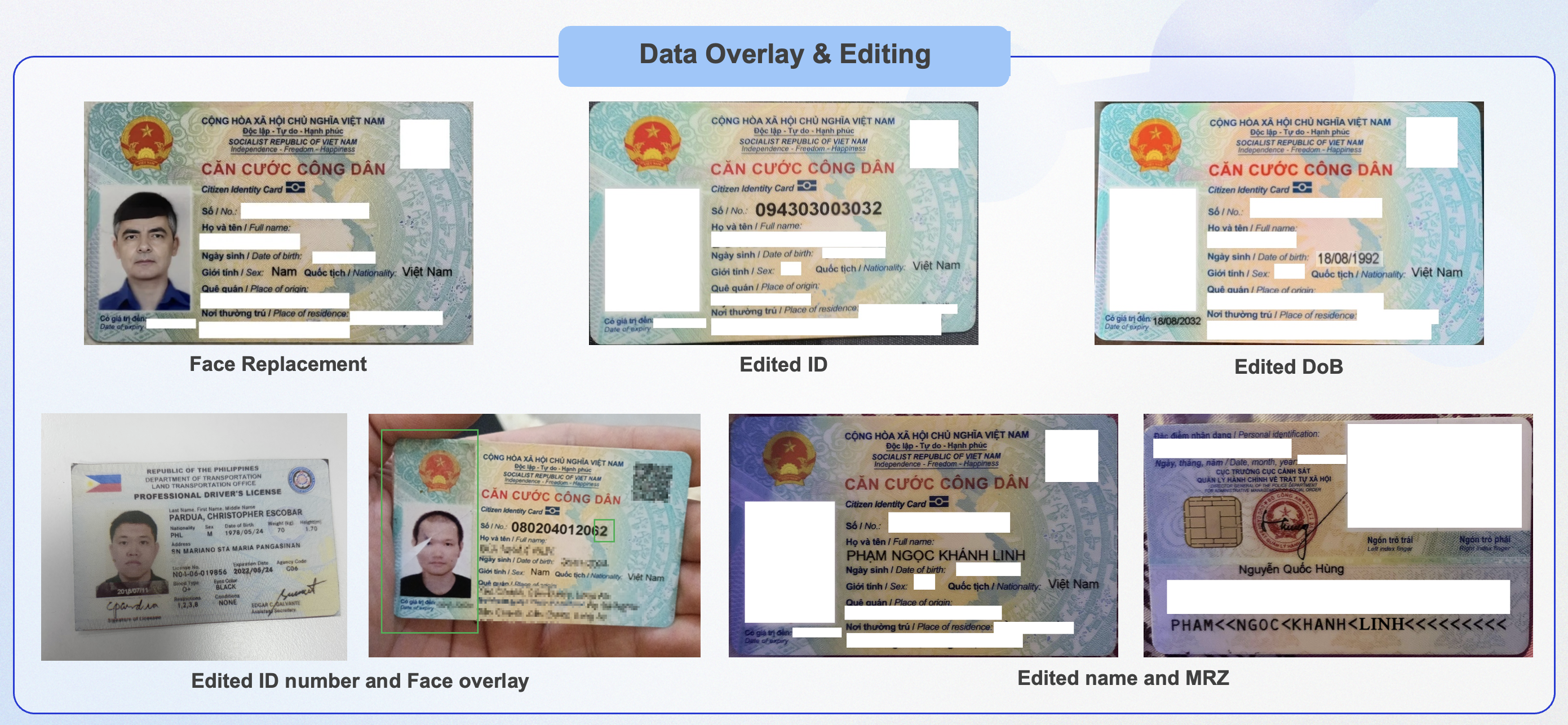

- Physical forged ID cards;

- Tampered or altered identity documents.

b. Threat Severity

These attacks are particularly dangerous because:

- They take place in the physical world, so the captured image is photometrically consistent (lighting, shadows, and reflections behave as in a genuine capture);

- They can defeat systems that rely solely on face matching;

- They are becoming increasingly sophisticated (e.g., high-resolution print attacks, deepfake video replay).

c. Countermeasures

Presentation Attack Detection (PAD) is primarily AI-driven.

For facial spoofing, detection is performed through:

- Texture analysis - identifies surface characteristics indicative of a screen or printed material;

- Liveness detection:

  - Active - prompts the user to perform actions such as turning their head or blinking;

  - Passive - analyzes micro-expressions and lighting cues without user interaction;

- 3D face reconstruction - distinguishes between a flat surface and a genuine three-dimensional facial structure;

- Flash Liveness Detection - exploits the physical interaction between light and facial surfaces to detect spoofing, by analyzing: facial depth and geometry; the reflective properties of human skin versus screens or printed materials; and differential light response across distinct facial regions.
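
The active liveness check listed above can be illustrated with a small challenge-response sketch. It is not the product's implementation: the action labels, the `make_challenge` and `verify_response` helpers, and the 10-second limit are all assumptions made for illustration, and the action detector that produces the observed events is assumed to exist elsewhere.

```python
import secrets

# Hypothetical action labels; a real system would define its own set.
ACTIONS = ("turn_left", "turn_right", "blink", "nod")


def make_challenge(length: int = 3) -> list[str]:
    """Pick an unpredictable prompt sequence so a pre-recorded video cannot anticipate it."""
    rng = secrets.SystemRandom()
    return [rng.choice(ACTIONS) for _ in range(length)]


def verify_response(challenge: list[str],
                    observed: list[tuple[str, float]],
                    max_seconds: float = 10.0) -> bool:
    """Check that the prompted actions were observed in order within the time limit.

    `observed` holds (action, seconds_since_prompt) pairs from an assumed
    action detector. Extra actions between the prompted ones are tolerated.
    """
    if not challenge:
        return False
    next_index = 0
    for action, timestamp in sorted(observed, key=lambda event: event[1]):
        if timestamp > max_seconds:
            break  # anything after the deadline does not count
        if action == challenge[next_index]:
            next_index += 1
            if next_index == len(challenge):
                return True
    return False
```

Because the sequence is drawn from a cryptographically secure source for each session, a replayed video would have to contain the right actions in the right order by chance.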

For document spoofing, detection is performed through:

- Tampering Detection:

  - Detecting signs of content manipulation (e.g., text or photo replacement/overlay);

  - Identifying structural inconsistencies and visual artifacts in the image;

  - Detecting synthetically generated documents (software-generated/fake documents).

- Security Feature Verification:

  - Verifying fonts, layout, and standard document formatting;

  - Validating security features such as holograms, patterns, and background elements.

- Image Quality Assessment:

  - Evaluating sharpness, brightness, and contrast;

  - Detecting blur, noise, or excessive compression;

  - Detecting cropped images or missing document regions.

- Logical Consistency & Data Validation:

  - Validating data format and structure (e.g., ID number, date of birth);

  - Cross-checking consistency across data fields;

  - Applying specialized rule-based validation.
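
As a rough illustration of the logical consistency and data validation step, the sketch below checks field formats and then cross-checks dates against each other. The field names, the 12-digit ID pattern, the ISO date format, and the `validate_fields` helper are assumptions chosen for the example, not the rules the product applies.

```python
import re
from datetime import date

# Assumed format for illustration only: exactly 12 ASCII digits.
ID_PATTERN = re.compile(r"[0-9]{12}")
DATE_FIELDS = ("date_of_birth", "issue_date", "expiry_date")


def validate_fields(fields: dict[str, str], today: date) -> list[str]:
    """Return a list of human-readable problems; an empty list means all checks passed."""
    errors = []

    # Format and structure checks.
    if not ID_PATTERN.fullmatch(fields.get("id_number", "")):
        errors.append("id_number: expected 12 digits")
    parsed = {}
    for name in DATE_FIELDS:
        try:
            parsed[name] = date.fromisoformat(fields.get(name, ""))
        except ValueError:
            errors.append(f"{name}: not a valid ISO date")

    # Cross-field consistency checks, run only on fields that parsed.
    dob = parsed.get("date_of_birth")
    issued = parsed.get("issue_date")
    expiry = parsed.get("expiry_date")
    if dob and dob > today:
        errors.append("date_of_birth: lies in the future")
    if issued and issued > today:
        errors.append("issue_date: lies in the future")
    if dob and issued and issued <= dob:
        errors.append("issue_date: not after date_of_birth")
    if issued and expiry and expiry <= issued:
        errors.append("expiry_date: not after issue_date")
    return errors
```

Passing `today` explicitly keeps the function deterministic, which makes rule changes easier to test.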

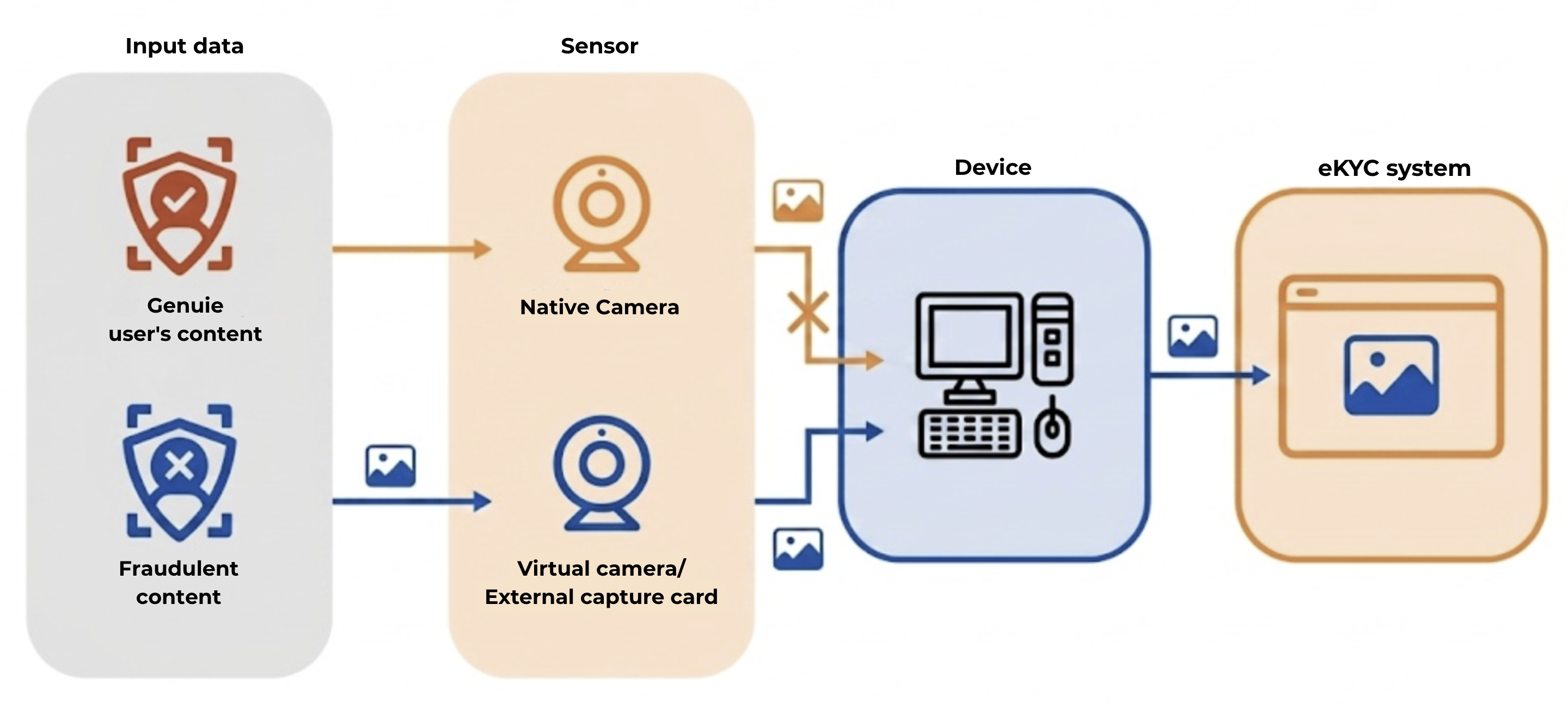
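
The image quality assessment step (sharpness, brightness, contrast) can be sketched with simple statistics over a grayscale image. This is a minimal example, not the product's method: the variance of a Laplacian response is one common blur heuristic swapped in here for illustration, and the thresholds and the `quality_metrics` and `quality_issues` helpers are assumed values and names.

```python
from statistics import fmean, pstdev, pvariance


def quality_metrics(gray: list[list[int]]) -> dict[str, float]:
    """Compute basic metrics for a grayscale image given as rows of 0-255 values (at least 3x3)."""
    pixels = [p for row in gray for p in row]
    height, width = len(gray), len(gray[0])
    # 4-neighbour Laplacian on interior pixels; blurry images give a low-variance response.
    laplacian = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1] - 4 * gray[y][x]
        for y in range(1, height - 1)
        for x in range(1, width - 1)
    ]
    return {
        "brightness": fmean(pixels),     # mean intensity
        "contrast": pstdev(pixels),      # spread of intensities
        "sharpness": pvariance(laplacian),
    }


def quality_issues(metrics: dict[str, float],
                   min_brightness: float = 40.0,
                   max_brightness: float = 220.0,
                   min_contrast: float = 20.0,
                   min_sharpness: float = 100.0) -> list[str]:
    """Compare metrics with assumed thresholds and list the problems found."""
    issues = []
    if metrics["brightness"] < min_brightness:
        issues.append("too dark")
    if metrics["brightness"] > max_brightness:
        issues.append("too bright")
    if metrics["contrast"] < min_contrast:
        issues.append("low contrast")
    if metrics["sharpness"] < min_sharpness:
        issues.append("blurry")
    return issues
```

In practice the thresholds would need tuning per document type and capture device; the values above are placeholders.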

2. Injection Attack

a. Overview

An Injection Attack is a cyberattack in which an adversary directly intercepts the digital data pipeline, injecting fraudulent data into the system without any involvement of a real physical sensor (camera).

Unlike Presentation Attacks, Injection Attacks:

- Do not occur in the physical world;

- Instead, they operate at the application execution layer:

  - Operating System (OS);

  - App runtime;

  - API layer.

Common techniques include:

- Virtual camera injection - substituting the real camera feed with a virtual one;

- API interception - overriding data returned by the camera API;

- File-based replay - injecting pre-recorded video or image files;

- Live deepfake streaming - streaming synthetic facial video in real time;

- Emulator or rooted device exploitation - leveraging compromised or simulated environments.

b. Threat Severity

Injection Attacks are considered more systemically dangerous than Presentation Attacks because:

- They can entirely bypass AI systems without needing to deceive them;

- They are difficult to detect using image analysis alone - whether by AI models or by human inspection.

c. Countermeasures

Defending against Injection Attacks requires more than AI-based analysis - it demands a dedicated protection layer at the device level. This role is fulfilled by the Mobile SDK, which serves as a trusted security layer positioned between the camera and the backend.

The Mobile SDK enforces security mechanisms to ensure the integrity of the execution environment and the trustworthiness of input data, including:

- Device Integrity Verification - validates that the device has not been tampered with;

- Root/Jailbreak Detection - blocks devices on which security restrictions have been removed;

- Anti-Hooking & Anti-Debugging - protects the application from runtime interference and malicious code injection that could alter processing behavior;

- Virtual Camera & Emulator Detection - identifies the use of virtual cameras and simulated environments;

- Runtime Attestation - continuously monitors the application's runtime state to detect anomalies or signs of tampering.
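
To show how a backend might act on the mechanisms listed above, the sketch below turns a set of device signals into an accept or reject decision. The `DeviceSignals` fields and both helper functions are hypothetical names for illustration; they do not describe the Mobile SDK's actual API or payload format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceSignals:
    """Hypothetical signals reported alongside a capture; field names are illustrative."""
    integrity_ok: bool
    rooted_or_jailbroken: bool
    hooking_or_debugger: bool
    virtual_camera: bool
    emulator: bool
    attestation_ok: bool


def blocking_reasons(signals: DeviceSignals) -> list[str]:
    """List every failed check so the rejection can be logged and audited."""
    checks = [
        (not signals.integrity_ok, "device integrity check failed"),
        (signals.rooted_or_jailbroken, "device is rooted or jailbroken"),
        (signals.hooking_or_debugger, "hooking or debugger detected"),
        (signals.virtual_camera, "virtual camera detected"),
        (signals.emulator, "emulator detected"),
        (not signals.attestation_ok, "runtime attestation failed"),
    ]
    return [reason for failed, reason in checks if failed]


def accept_capture(signals: DeviceSignals) -> bool:
    """Accept the capture only when no check failed (fail-closed policy)."""
    return not blocking_reasons(signals)
```

Returning all reasons rather than stopping at the first one is a design choice that helps when analyzing attack patterns across sessions.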

Additional security measures at the mobile application level include:

- Legacy Device and OS Restrictions - limiting or discontinuing support for outdated OS versions that pose significant security risks;

- Mobile App Updates - encouraging users to update to the latest app version to benefit from the most current anti-tampering techniques for the camera pipeline;

- Other measures - including app shielding and detection of background processes that may present security risks.
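
The OS restriction and app update measures can be sketched as a simple version gate. The minimum versions, the status strings, and the `client_status` helper below are assumed for the example and are not the product's actual policy.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Parse a dotted numeric version such as "14.4.1", padding to three components."""
    parts = tuple(int(part) for part in text.split("."))
    return parts + (0,) * (3 - len(parts))


# Hypothetical policy values chosen for illustration.
MIN_OS = {"android": (10, 0, 0), "ios": (15, 0, 0)}
MIN_APP = (3, 2, 0)


def client_status(platform: str, os_version: str, app_version: str) -> str:
    """Classify a client as "unsupported_os", "update_recommended", or "ok"."""
    min_os = MIN_OS.get(platform.lower())
    # Unknown platforms are treated as unsupported rather than allowed by default.
    if min_os is None or parse_version(os_version) < min_os:
        return "unsupported_os"
    if parse_version(app_version) < MIN_APP:
        return "update_recommended"
    return "ok"
```

Non-numeric version strings raise `ValueError` in this sketch; a production check would need to decide how to treat them.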